The Great Convergence: Why AI Is Shifting from Frontier Science to Global Commodity

Rahul Rajakumar, Junior Research Analyst at Tamil Economic Forum

For the past three years, global discussions around AI have been dominated by a “race to the top.” Investors and policymakers tracked which AI lab (OpenAI, Anthropic, Google, Meta, etc.) held the crown for the smartest model. That era is now closing.

Model intelligence is converging and deployment costs are collapsing. As a result, AI is rapidly commoditising. The competitive “battlefield” is shifting away from raw model capability and toward the application layer: reach, integration, and trust.

1. The Shrinking Gap

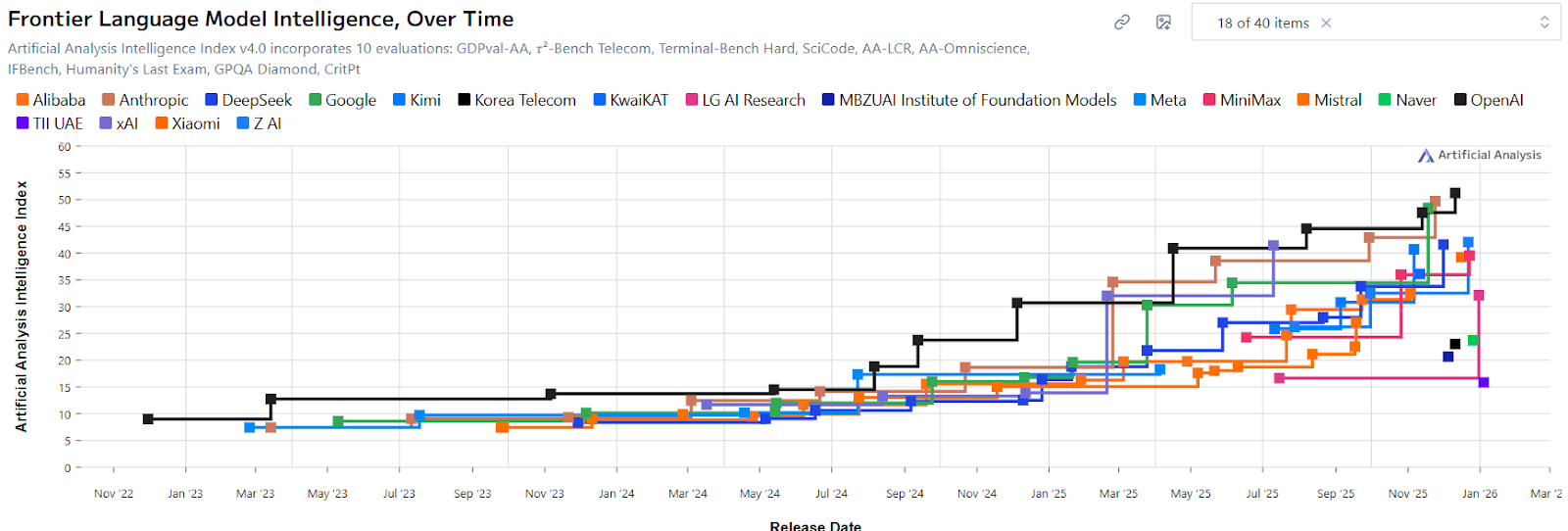

Data from the Frontier Language Model Intelligence Index shows that the defining trend of late 2025 and early 2026 is the clustering of top-tier model performance. Early in the LLM cycle, a clear hierarchy existed, with large vertical leaps led mostly by OpenAI. Today, that hierarchy has flattened into a crowded plateau in the top-right corner of the chart.

While absolute intelligence continues to rise, the lead time enjoyed by any single firm has vanished. Essentially, whenever a new performance ceiling is set, competitors now reach it within weeks or months, not years. The gap between the top models is increasingly marginal.

Intelligence is no longer a scarce resource tied to a single provider; it is becoming a utility. Much like the car industry once moved beyond competing on whether the engine worked, frontier AI has reached a point where the “engine” is assumed. The short-term question is who provides the best vehicle around it: interface, integration, and user experience. The longer-term question is which segment of society (private individuals, enterprises, or governments) will drive the volume and speed needed for mass adoption of that “best vehicle”. Put simply: who will ultimately determine where value concentrates and, with it, who the “winners” are?

2. The Price Collapse

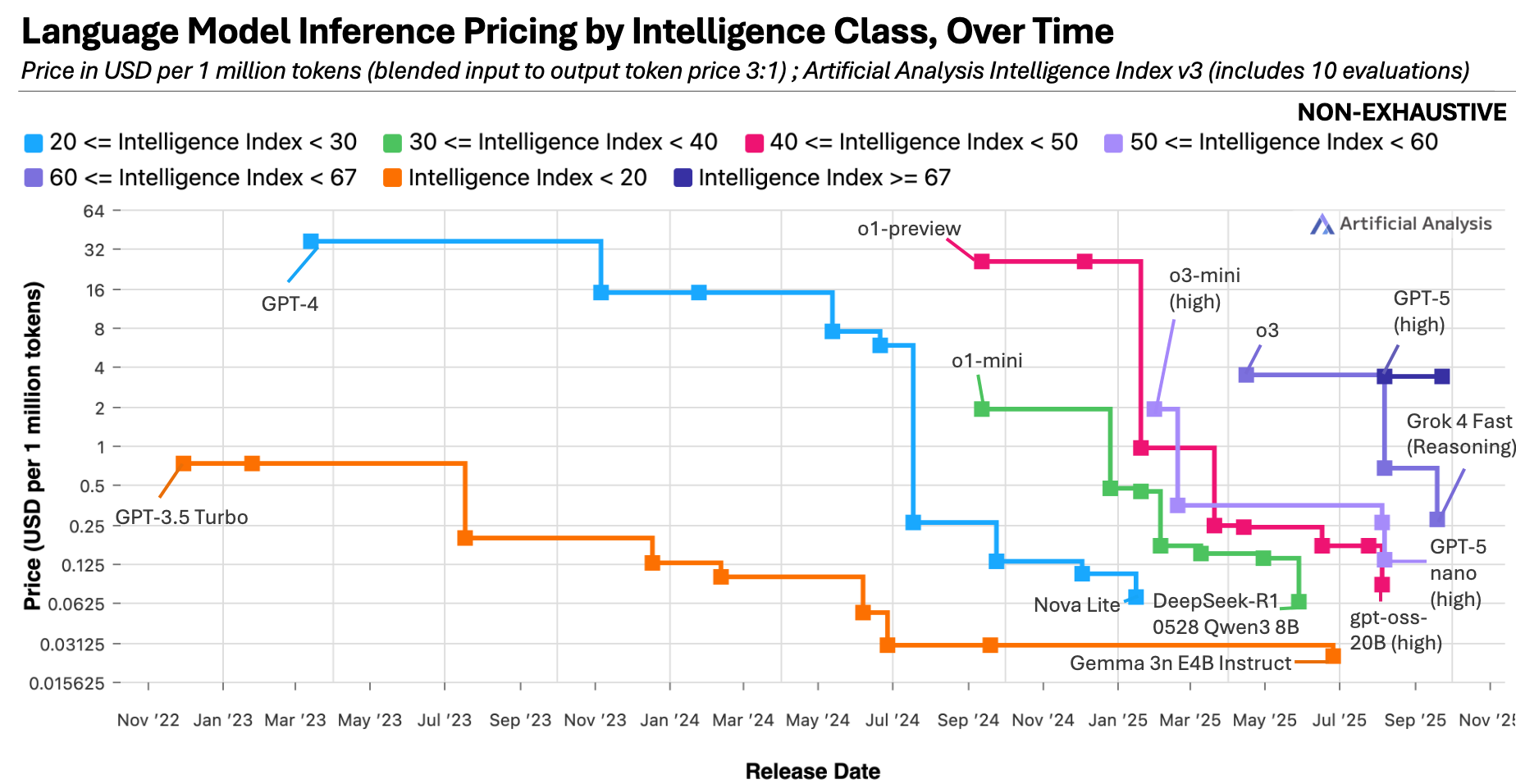

Convergence in performance is only half the story. In parallel, the cost of AI computation is collapsing at an extraordinary rate. Epoch AI data shows that prices per million tokens are falling exponentially, with some performance tiers seeing reductions of up to 900× per year.
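To give a sense of scale, the arithmetic behind an exponential decline can be sketched in a few lines of Python. This is only an illustration: it takes the 900× per year figure (the upper end quoted above) at face value, assumes the decline is perfectly smooth, and uses a hypothetical starting price of $10 per million tokens that does not come from the Epoch AI data.

```python
import math


def monthly_factor(annual_factor: float) -> float:
    """Constant monthly decline factor that compounds to annual_factor over 12 months."""
    return annual_factor ** (1 / 12)


def halving_time_months(annual_factor: float) -> float:
    """Months needed for the price to halve under a smooth exponential decline."""
    return 12 * math.log(2) / math.log(annual_factor)


def price_after(start_price: float, annual_factor: float, years: float) -> float:
    """Price after `years`, given a constant decline of annual_factor per year."""
    return start_price / annual_factor ** years


if __name__ == "__main__":
    annual = 900.0       # upper-end annual decline quoted in the text
    start_price = 10.0   # hypothetical price in USD per million tokens (assumption)

    print(f"Monthly decline factor: {monthly_factor(annual):.2f}x")
    print(f"Price halves every {halving_time_months(annual):.2f} months")
    print(f"Price after 6 months: ${price_after(start_price, annual, 0.5):.4f}")
    print(f"Price after 1 year:   ${price_after(start_price, annual, 1.0):.4f}")
```

Under these assumptions, a 900× annual decline means the price falls by well over a third every month. That is why the fastest-falling tiers stop looking like premium products within a single budgeting cycle.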

This is driven not just by competition, but by hardware breakthroughs. According to Nvidia, its chips have delivered up to a 105,000× increase in energy efficiency for specific AI workloads over the past decade. When the cost of a resource falls dramatically, its economic character changes. We do not debate who provides the “best” electricity; we focus on what it enables. AI is entering this utility phase. Frontier-level reasoning is becoming cheap and abundant. The constraint is no longer access to models, but the capacity to deploy them well.

3. Winning the Next Phase

So, if models are commodities, where does value shift?

To the application layer and the surrounding ecosystem. The transition mirrors smartphones. Once hardware and operating systems stabilised, value moved to apps and platform trust. Users choose ecosystems (Google, Apple, etc.), not processors. The same logic now applies to AI.

This creates new strategic imperatives:

- Context beats capability. AI integrated into legal, medical, financial, or government workflows outperforms generic intelligence.

- Distribution matters more than intelligence. Being embedded where users already are (enterprise software, operating systems, devices) beats marginal model gains.

- Trust becomes the differentiator. As models converge, reliability, data privacy, compliance, and institutional credibility matter more than novelty. In a commoditised market, the most dependable provider often wins.

Conclusion

The shift from AI-as-science to AI-as-commodity signals maturity. For entrepreneurs, investors, and policymakers, this reframes the challenge. The key divide is no longer access to powerful models, but the ability to deploy them effectively.

If intelligence is getting cheaper and more universal, the winners will not be those who build the models, but those who integrate them best to solve real structural problems. We are moving past “what can the model do?” and into “what can we do with the model?”

In the age of AI as a commodity, advantage belongs to the integrated, the trusted, and the bold. The endgame remains unwritten, and the timeline is far less predictable than most assume.

Sources:

https://epoch.ai/data-insights/llm-inference-price-trends

https://images.nvidia.com/aem-dam/Solutions/documents/NVIDIA-Sustainability-Report-Fiscal-Year-2025.pdf